LIHUE — Artificial intelligence is helping scientists sort through more than 19 years’ worth of humpback whale songs at the Pacific Islands Fisheries Science Center in Honolulu.

And the result could be a better understanding of population numbers and travel patterns, perhaps answering the question: Where are the whales?

Two projects joined forces when National Oceanic and Atmospheric Administration scientist Ann Allen, on the hunt for a way to analyze 170,000 hours of acoustic data, connected with Google’s Artificial Intelligence group.

Applying its machine learning techniques to conservation was an attractive enough idea that Google jumped on board, and the two sides made a plan to teach a computer to identify humpback whale songs.

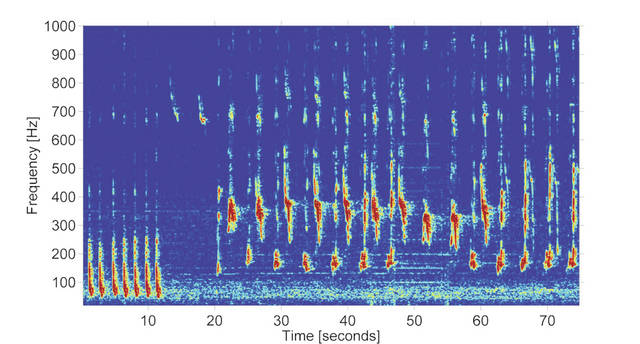

“As a researcher if I was looking at a spectrogram of whale calls, even if I’d never heard that specific song before, I could tell it was a humpback whale,” Allen said. “So, give the computer enough examples of humpback whale songs” and it can identify the songs.
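
For readers curious what a spectrogram is, the sketch below builds one from scratch: it slices a sound into short frames and measures how much energy each frame holds at each frequency. This is an illustration only, not the software NOAA or Google used. The function names, the frame size, the hop length and the synthetic 500 Hz test tone are all assumptions made for the example.

```python
import cmath
import math


def spectrogram(samples, frame_size=64, hop=32):
    """Return one list of frequency-bin magnitudes per overlapping frame."""
    frames = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = samples[start:start + frame_size]
        # Taper the frame with a Hann window to limit spectral leakage.
        windowed = [
            s * (0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_size - 1)))
            for n, s in enumerate(frame)
        ]
        # Naive discrete Fourier transform over the non-negative frequencies.
        magnitudes = []
        for k in range(frame_size // 2 + 1):
            total = sum(
                x * cmath.exp(-2j * math.pi * k * n / frame_size)
                for n, x in enumerate(windowed)
            )
            magnitudes.append(abs(total))
        frames.append(magnitudes)
    return frames


def tone(freq_hz, sample_rate, n_samples):
    """Synthetic pure tone, standing in for a real recording (assumption)."""
    return [math.sin(2 * math.pi * freq_hz * n / sample_rate)
            for n in range(n_samples)]


SAMPLE_RATE = 2000  # samples per second, chosen only for this toy example
spec = spectrogram(tone(500.0, SAMPLE_RATE, 256))

# The strongest bin in each frame; bin k corresponds to k * SAMPLE_RATE / 64 Hz.
peak_bins = [max(range(len(frame)), key=frame.__getitem__) for frame in spec]
```

Plotted with time on one axis and frequency on the other, those magnitudes form the kind of picture Allen describes reading by eye.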

Rather than giving a computer step-by-step instructions, machine learning has it learn patterns from examples, loosely the way a person learns to recognize something after seeing it many times.

The idea sprang out of a conversation between Allen and her father after she had been hired to work on acoustic data at Pacific Islands Fisheries Science Center’s Cetacean Research Program.

She told him about her problem — too much data. He suggested a solution she had never considered.

“He said, ‘What about getting Shazam or Google to help,’” Allen said.

At first, Allen thought whale and human songs were too much of an apples-and-oranges scenario for something like Shazam — an app that identifies human songs from recordings.

But after further thought, she decided the idea just might work and contacted a friend who worked at Google. That got the ball rolling, and now computers at Google Artificial Intelligence are identifying humpback whale songs in recordings from high-frequency acoustic recording packages (HARPs) deployed across the Pacific Ocean.

“It’s doing a pretty good job for humpback whales,” Allen said. “We’re still working on some sites that I didn’t provide much training data for.”

Training is a multistep process that started with Allen. She gave Google a hard drive containing all of the center’s underwater recordings, along with a small set of “training data” in which humpback calls had been individually marked by hand. Those examples were used to teach the computer to recognize humpback whale songs, a learning process complicated by ships and other ocean noise.

“Ships look like song and even some of the recording equipment looks like song, so it’s taken several rounds of training,” Allen said.
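
The round-by-round training Allen describes can be sketched in a few lines. The example below is a toy, not Google's model: it swaps in a simple nearest-centroid classifier, and the two-number "features", the labels and every data point are invented for illustration. It shows a ship-like clip mistaken for song after the first round, then correctly rejected once such clips are labeled and the model is retrained.

```python
import math


def centroid(points):
    """Average position of a list of equal-length feature tuples."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(len(points[0])))


def train(examples):
    """examples: list of (features, label). Returns {label: centroid}."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {label: centroid(pts) for label, pts in by_label.items()}


def predict(model, features):
    """Pick the label whose centroid is closest to the features."""
    return min(model, key=lambda label: math.dist(features, model[label]))


# Round 1: a small hand-labeled set (all values are made up).
labeled = [
    ((0.9, 0.8), "song"), ((0.8, 0.9), "song"),
    ((0.1, 0.1), "other"), ((0.2, 0.0), "other"),
]
model = train(labeled)

ship = (0.7, 0.4)  # hypothetical ship-noise clip that resembles song
first_guess = predict(model, ship)   # mistaken for song

# Round 2: a reviewer marks ship-like clips as "other", then retrains.
labeled += [((0.7, 0.4), "other"), ((0.75, 0.35), "other"),
            ((0.65, 0.45), "other")]
model = train(labeled)
second_guess = predict(model, ship)  # now rejected
```

The point of the sketch is the loop, not the classifier: each round of corrected mistakes gives the model better examples of what is not song.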

Off the Kona Coast, though, NOAA has a HARP location that’s been monitored for the past 10 years. The AI is doing well at identifying humpback song in that area, and NOAA is now actively collecting data to compare the Kona site with a HARP site in the Northwest Hawaiian Islands.

The purpose, Allen said, is “to see if the reduction in numbers is them moving up to the Northwest Hawaiian Islands or if it’s an actual decrease in numbers visiting.”

Over the long term, Allen said, the idea is to develop a tool that can take in data from new sites and new songs and yield new information.

“An integrated tool would be revolutionary for our field,” Allen said. “It’s a very powerful tool and there’s so much we can learn from it.”